Author: Satya Surabhi, PhD

Research Assistant Professor, Albert Einstein College of Medicine, New York, USA.

Editor: Paramita Sarkar, PhD

Postdoctoral Fellow, NIH, USA

The relationship between the human brain and modern computational systems is bidirectional: each continues to inspire and accelerate the other. Artificial neural networks were originally modeled on how neurons connect and communicate in biological systems. In turn, modern computational tools now allow researchers to analyze large-scale data and identify patterns far faster than traditional methods.

In 2024, this connection was formally recognized when the Nobel Prize in Physics was awarded to John J. Hopfield and Geoffrey E. Hinton for foundational discoveries and inventions that enable machine learning with artificial neural networks (Nobel Prize in Physics).

How Does the Brain Inspire Computation?

Artificial intelligence began with a deceptively simple question: Can machines think like the brain?

This question gradually reshaped how computational systems are designed. Modern artificial intelligence systems increasingly draw inspiration from neural mechanisms underlying brain function, employing layered processing architectures that enable image recognition, speech understanding, strategic game playing, and medical diagnosis. Reinforcement-based learning reflects dopamine-driven reward mechanisms in the brain (Schultz et al., 1997), while Hebbian learning captures how repeated activation strengthens synaptic connections and forms the basis of learning (Hebb, 1949). This principle is often summarized by the phrase coined by Carla Shatz: neurons that fire together, wire together (Shatz, 1992).
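The two learning ideas above can be sketched in a few lines of Python. This is a toy illustration, not the formulation used in the cited works: the function names, the learning rates, and the simplified temporal-difference form of the reward prediction error are all assumptions made for the example.

```python
def hebbian_update(weights, pre, post, learning_rate=0.1):
    """One Hebbian step: the weight from input j to output i grows by
    learning_rate * post[i] * pre[j], so co-active pairs are strengthened."""
    return [
        [w + learning_rate * post_i * pre_j for w, pre_j in zip(row, pre)]
        for row, post_i in zip(weights, post)
    ]


def reward_prediction_error(reward, value, next_value, discount=0.9):
    """Simplified temporal-difference error: what happened minus what was expected."""
    return reward + discount * next_value - value


# Hebbian learning: only the connection between the two co-active units grows.
weights = [[0.0, 0.0], [0.0, 0.0]]
pre = [1.0, 0.0]   # presynaptic activity (hypothetical values)
post = [1.0, 0.0]  # postsynaptic activity (hypothetical values)
for _ in range(3):
    weights = hebbian_update(weights, pre, post)

# Reward learning: the value estimate moves toward the delivered reward,
# and the prediction error shrinks as the reward becomes expected.
value = 0.0
for _ in range(50):
    delta = reward_prediction_error(reward=1.0, value=value, next_value=0.0)
    value += 0.2 * delta
```

After the first loop, only `weights[0][0]` is non-zero, because only that pair of units fired together. After the second loop, `value` is close to 1.0 and `delta` is close to zero, mirroring the observation that a fully predicted reward produces little error signal.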

These ideas are not purely theoretical; they are deeply rooted in biology. Studies of animals such as mice, flies, and primates have provided critical insights into how intelligence emerges from networks of simple interacting units, directly influencing the development of more adaptive and flexible computational systems (Kandel et al., 2013). In this way, neuroscience remains one of the most powerful foundations of modern computation.

How Does Computation Advance Brain Research?

“The brain holds the secrets, and computation is helping unlock them.”

Computational methods now enable researchers to analyze large datasets, map brain activity, identify biomarkers of disease, and support early diagnosis of neurological disorders. Advances such as AlphaFold have enabled rapid prediction of protein structures, accelerating biological discovery across many fields, including neuroscience (Jumper et al., 2021; Tunyasuvunakool et al., 2021).

In parallel, large-scale computational methods have contributed to mapping neural connectivity and to improving brain imaging analysis and simulation (Sporns, 2010). Together, these advances allow neuroscience to operate at a scale and resolution that was unimaginable a decade ago.

Limits of the Brain–Computation Analogy

Biological and artificial systems may appear similar at first glance, but they differ in a fundamental way: nature does not rely on software updates.

Most computational models depend on global optimization methods such as backpropagation, introduced by David Rumelhart and colleagues (1986), whereas the brain relies on local, distributed, and continuously evolving learning mechanisms. The brain is also remarkably energy efficient, operating on roughly 20 watts, as estimated by David Attwell and Simon Laughlin (2001), while large-scale AI systems consume orders of magnitude more power. Additionally, the brain continuously adapts its synapses, responsiveness, and chemical composition, whereas most computational models adjust a fixed set of parameters during training.
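To make the idea of a global error signal concrete, here is a minimal sketch of backpropagation on a two-weight network, y = w2 * tanh(w1 * x). The network, the squared-error loss, and all numeric values are illustrative assumptions rather than anything taken from Rumelhart et al. The point to notice is that the gradient for the first weight depends on the output error and on the downstream weight, which is information not available locally at that connection.

```python
import math


def forward(w1, w2, x):
    """Two-stage network: hidden activity h, then output y."""
    h = math.tanh(w1 * x)
    y = w2 * h
    return h, y


def loss(w1, w2, x, target):
    """Squared error between the output and the target."""
    _, y = forward(w1, w2, x)
    return 0.5 * (y - target) ** 2


def backprop_gradients(w1, w2, x, target):
    """Chain rule applied from the output back to the first weight."""
    h, y = forward(w1, w2, x)
    error = y - target                        # dL/dy, computed at the output
    grad_w2 = error * h                       # uses the global output error
    grad_w1 = error * w2 * (1.0 - h * h) * x  # also needs the downstream weight w2
    return grad_w1, grad_w2


def train(w1, w2, x, target, learning_rate=0.1, steps=200):
    """Plain gradient descent on both weights."""
    for _ in range(steps):
        g1, g2 = backprop_gradients(w1, w2, x, target)
        w1 -= learning_rate * g1
        w2 -= learning_rate * g2
    return w1, w2


x, target = 1.0, 0.8  # hypothetical single training example
initial_loss = loss(0.5, 0.5, x, target)
w1, w2 = train(0.5, 0.5, x, target)
final_loss = loss(w1, w2, x, target)
```

A purely local rule, by contrast, would update each weight using only the activity on either side of that connection, without access to `error` or to other weights.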

To bridge this gap, researchers are developing neuromorphic computing systems that more closely emulate biological principles. Examples include Intel Loihi, IBM TrueNorth, SpiNNaker, and BrainChip Akida, which implement spiking neural architectures aimed at achieving more energy-efficient and brain-like computation, as discussed by Giacomo Indiveri and colleagues (2011).
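As a rough illustration of what "spiking" means, the sketch below simulates a single leaky integrate-and-fire neuron, a common textbook simplification. It is not the neuron model of any of the chips named above, and the function name, threshold, and leak factor are assumptions chosen for the example.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Leaky integrate-and-fire neuron in discrete time.

    The membrane potential decays by `leak` each step, accumulates the input,
    and emits a spike (1) followed by a reset whenever it reaches `threshold`.
    """
    potential = reset
    spikes = []
    for current in input_current:
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)
            potential = reset
        else:
            spikes.append(0)
    return spikes


# A steady input of 0.5 per step needs three steps to reach threshold.
spike_train = simulate_lif([0.5] * 6)  # [0, 0, 1, 0, 0, 1]
```

Because the neuron stays silent except at the moments it crosses threshold, communication is sparse and event-driven, which is one reason spiking designs are explored for energy efficiency.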

India’s Growing AI–Neuroscience Ecosystem

India represents a uniquely diverse environment for computational neuroscience because of its linguistic and cultural diversity. With 22 scheduled languages and hundreds of dialects, it provides a real-world testbed for evaluating how well computational systems generalize across heterogeneous conditions. This is especially important for low-resource languages, where limited datasets and high variability make standard approaches challenging (Joshi et al., 2020; Bender & Friedman, 2018).

Major Research Institutions Driving Brain–Computation Science in India

India’s brain–computation ecosystem spans academia, industry, and government initiatives, including the India Semiconductor Mission, which together support advances in research, computational infrastructure, and large-scale translational innovation across neuroscience and artificial intelligence.

1. The Engineering Powerhouses (IIT Madras & IISc)

IIT Madras: A notable hub in neurorehabilitation research, developing brain–computer interfaces (BCIs) to decode neural signals and explore their use in restoring movement in stroke and spinal cord injury patients using robotic exoskeletons.

IISc Bangalore: Active in neuromorphic hardware research, designing Brain-on-a-Chip systems that mimic neurons for improved AI energy efficiency.

2. The Biological “Data Factories” (NCBS, CALIBRE, NIMHANS, TIFR & InStem)

These institutions generate the high-quality biological data that powers AI research.

NCBS, Bangalore: Maps neural circuits using calcium imaging and electrophysiology, from fruit fly memory to zebrafish locomotion.

CALIBRE (NCBS + ICTS): Applies mathematical and physics-based frameworks to large-scale biological datasets, finding patterns across complex brain phenomena.

NIMHANS, Bangalore: Combines clinical neuroscience with one of India’s longest-running Human Brain Tissue Repositories (est. ~1995), which collects and preserves post-mortem brain tissue for research on neurodegenerative and psychiatric diseases (Shankar et al., 2013). Using fMRI and EEG, researchers study conditions from schizophrenia to epilepsy.

TIFR, Mumbai: Bridges quantitative physics and biology, contributing theoretical rigor to systems neuroscience.

InStem, Bangalore: Uses stem cell-derived human neurons to model neurological disease, providing data that animal models alone cannot (Sachitanand, 2009; Institute for Stem Cell Science and Regenerative Medicine, 2009).

Rohini Nilekani Centre for Brain and Mind (CBM): A 2023 collaborative initiative between NCBS, NIMHANS, and partner institutions, harnessing human genetics, stem cell biology, and neuroimaging to decode the biology of mental illness.

3. The Specialized Modelers (NBRC, IISERs & BIT Mesra)

NBRC (National Brain Research Centre), Manesar: India’s only institute dedicated exclusively to neuroscience research and education (est. 1997, under the Department of Biotechnology).

NBRC has developed India-specific AI neuroimaging tools, including BRAHMA (India’s first high-resolution brain MRI template for the Indian population), GAURI (a predictive AI diagnostic tool for Alzheimer’s and Parkinson’s disease), and BHARAT (a big-data fMRI framework for early detection of Alzheimer’s disease) (see https://en.wikipedia.org/wiki/NationalBrainResearchCentre).

IISERs: Emphasize interdisciplinary training across biology, mathematics, physics, and computation, building the next generation of researchers who can operate across the brain–computation interface.

BIT Mesra: Applies machine learning and computational methods to biomedical imaging and clinical hospital data.

Industry and Translational Ecosystem

Major organizations, including TCS Research, Infosys, Wipro AI Labs, IBM Research India, Microsoft Research India, and Google Research India, are advancing healthcare systems, language technologies, automation, and large-scale data platforms, strengthening the computational infrastructure that brain and biomedical research depend on.

On the startup side, Niramai (Bangalore, est. 2016) uses AI-powered thermal imaging (Thermalytix) for non-invasive, radiation-free early breast cancer screening, clinically validated in a multicenter trial (BMJ Open, 2021) and a real-world study of 300,000+ women (Adapa et al., npj Digital Medicine, 2025). Qure.ai (Mumbai) applies deep learning to radiology, including chest X-ray interpretation for tuberculosis and stroke detection from CT scans. SigTuple (Bangalore) uses AI and microscopy automation to analyze blood smears and pathology samples, bringing computational diagnostics to clinical laboratories.

Semiconductor Foundations

Semiconductors form the physical foundation of modern computational systems, enabling large-scale training and inference for deep learning and multimodal models (Hennessy & Patterson, 2019; Sze et al., 2017). Because chips perform all of the underlying computations, advances in chip technology translate directly into AI progress: greater parallel processing allows models to train faster, respond more efficiently, and consume less energy.

In this context, the India Semiconductor Mission aims to strengthen domestic capabilities in chip design, fabrication, and packaging under the Ministry of Electronics and Information Technology (MeitY). Backed by an incentive program of approximately USD 10 billion (₹76,000 crore), this initiative seeks to build a robust semiconductor ecosystem in India. These advancements are likely to significantly enhance future capabilities in artificial intelligence and computational neuroscience, while also reducing dependence on imported semiconductor technologies.

A Unified Ecosystem

Neuroscience inspires computational models, computational advances accelerate neuroscience research, and semiconductor technologies provide the underlying hardware that enables both (Sze et al., 2017). Together, these domains create a system in which intelligence and computation are constantly interacting. This convergence is laying the foundation for India to emerge as a future hub for the integrated advancement of brain science, computing, and hardware innovation. What connects all of it is computation: tasks that once required years, such as brain image analysis and protein structure prediction, can now be performed in hours.

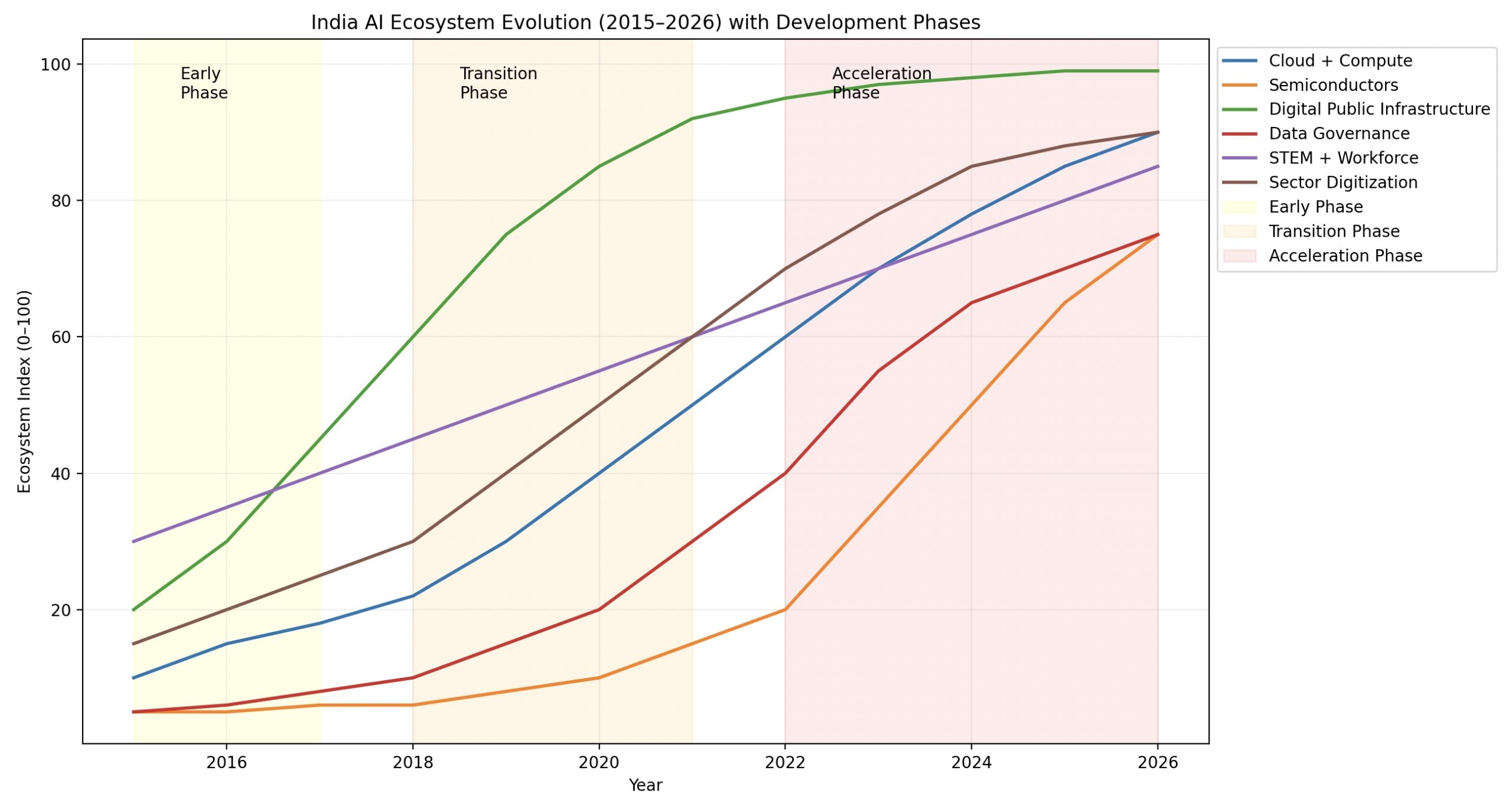

Fig. 1. India AI ecosystem maturity evolution (2015–2026). Normalized index (0–100) shows the growth of Digital Public Infrastructure, data systems, AI capability, funding, and industry adoption from early-stage emergence to large-scale integration.

Acknowledgement: Thanks to Bikramaditya Mandal, PhD, Postdoctoral Fellow, University of Nevada, Las Vegas, USA, for sharing his valuable insights and proofreading the article.

(P.S. This blog was crafted with a little AI assistance — no neurons were harmed in the making!)

Key Sources

[1] D. O. Hebb, The Organization of Behavior. Wiley, 1949.

[2] C. J. Shatz, “The developing brain,” Scientific American, vol. 267, no. 3, pp. 60–67, 1992.

[3] W. Schultz, P. Dayan, and P. R. Montague, “A neural substrate of prediction and reward,” Science, vol. 275, pp. 1593–1599, 1997.

[4] E. R. Kandel et al., Principles of Neural Science, 5th ed. McGraw-Hill, 2013.

[5] O. Sporns, Networks of the Brain. MIT Press, 2010.

[6] D. E. Rumelhart, G. E. Hinton, and R. J. Williams, “Learning representations by back-propagating errors,” Nature, vol. 323, pp. 533–536, 1986.

[7] D. Attwell and S. B. Laughlin, “An energy budget for signaling in the grey matter of the brain,” J. Cereb. Blood Flow Metab., vol. 21, no. 10, pp. 1133–1145, 2001.

[8] J. Jumper et al., “Highly accurate protein structure prediction with AlphaFold,” Nature, vol. 596, pp. 583–589, 2021.

[9] K. Tunyasuvunakool et al., “Highly accurate protein structure prediction for the human proteome,” Nature, vol. 596, pp. 590–596, 2021.

[10] G. Indiveri et al., “Neuromorphic silicon neuron circuits,” Front. Neurosci., vol. 5, p. 73, 2011.

[11] P. Joshi et al., “The state and fate of linguistic diversity in NLP,” in Proc. ACL, 2020.

[12] E. M. Bender and B. Friedman, “Data statements for NLP,” Trans. Assoc. Comput. Linguist., vol. 6, pp. 587–604, 2018.

[13] J. L. Hennessy and D. A. Patterson, Computer Architecture: A Quantitative Approach, 6th ed. Morgan Kaufmann, 2019.

[14] V. Sze et al., “Efficient processing of deep neural networks,” Proc. IEEE, vol. 105, no. 12, pp. 2295–2329, 2017.

[15] S. K. Shankar et al., NIMHANS brain tissue repository, 2013.

[16] R. Sachitanand, InStem institutional report, 2009.

[17] IIT Madras, Brain–Computer Interface Initiative.

[18] National Centre for Biological Sciences, CBM.

[19] National Brain Research Centre, India.

[20] BMJ Open, “Niramai breast cancer screening study,” 2021.

[21] S. R. Adapa et al., “AI-based digital medicine evaluation,” npj Digital Medicine, 2025.

[22] Ministry of Electronics and IT, Government of India, India Semiconductor Mission.